AI/ML

Google I/O 2025: AI Takes Center Stage in a Future-Forward Showcase

Google I/O 2025 showcased a future deeply interwoven with artificial intelligence, with the company’s Gemini family of AI models taking center stage. Enhancements like native audio outputs and a “Deep Think” reasoning mode for Gemini 2.5 Pro underscored a significant leap in AI capability, forming the backbone of numerous new features across Google’s ecosystem.

Google hosted its annual I/O developer conference this week at the Shoreline Amphitheatre in Mountain View, California, and the 2025 edition delivered a firehose of announcements. The keynote was packed with updates that reinforced the company’s commitment to artificial intelligence as the driving force behind nearly every product and service.

This year’s I/O felt distinctly focused on weaving AI deeper into the fabric of our digital lives. From revolutionary updates to existing services to the unveiling of entirely new AI models and tools, the message was clear: Google is betting big on a future powered by intelligent systems.

The Highlight: Project Chimera & The Gemini Ascendancy

While Google didn’t explicitly name one single “Project Chimera” in the keynote, the overarching theme clearly pointed towards a unified and significantly more powerful AI ecosystem, primarily driven by advancements in its Gemini family of models. The keynote emphasized making Gemini more capable, accessible, and integrated. This vision of a seamlessly interconnected AI layer, understanding and processing information across text, images, audio, video, and code, was the undeniable highlight.

The evolution of Gemini was showcased through several key announcements:

- Updates to Gemini 2.5 Flash and Pro models: These workhorse models received significant boosts, including native audio outputs for more natural and direct voice interactions, moving beyond text-to-speech.

- “Deep Think” reasoning mode in Gemini 2.5 Pro: This new capability allows Gemini 2.5 Pro to engage in more complex, multi-step reasoning and problem-solving, tackling more challenging queries.

These core model enhancements underpin many of the specific product updates and new features announced.

Key Announcements from the Google I/O 2025 Keynote:

Google unleashed a torrent of AI-centric updates. Here’s a breakdown of the major reveals:

Revolutionizing Search and Information Access:

- Expanded access to AI Overviews: More users will now see AI-generated summaries at the top of their search results, providing quick answers to complex queries.

- Search Live in AI Mode and Lens: This signifies a more conversational and interactive search experience. Imagine asking follow-up questions and getting refined results in real-time. Google Lens also sees deeper AI integration for understanding and interacting with the visual world.

- Gemini in Google Chrome: The browser will natively integrate Gemini, offering contextual assistance, summarization, and potentially content generation capabilities directly within Chrome.

Enhancing Productivity and Communication:

- Personalized smart replies in Gmail: Moving beyond generic suggestions, Gmail’s smart replies will become more attuned to your individual writing style and context.

- Speech translation in Meet: Real-time speech translation in Google Meet will break down language barriers in global communications.

- Expanded access to Gemini Live’s camera and screen sharing capabilities: Making Gemini more interactive, allowing it to “see” what the user sees via camera or screen share for more contextual help.

Next-Generation Generative AI Tools:

- Imagen 4: The next iteration of Google’s text-to-image model promises even higher fidelity, better prompt understanding, and more creative control over image generation.

- Veo 3: Google’s answer to generative video, Veo 3, showcased impressive capabilities in creating high-quality video clips from text prompts.

- Flow, a new AI filmmaking tool: This tool aims to assist creators in the filmmaking process, potentially from scriptwriting to storyboarding and even scene generation, powered by AI.

- SynthID Detector: Addressing concerns about AI-generated content, Google is improving its SynthID technology to better detect and watermark AI-created media.

AI in E-commerce and User Experience:

- Shopping in AI Mode: This will transform the online shopping experience, with AI guiding users through product discovery and decision-making.

- Agentic checkout: Imagine an AI agent that can handle the checkout process across different sites, simplifying online purchases.

- Virtual try-on tool: Enhancing online apparel shopping, this tool will use AI to allow users to virtually see how clothes might fit.

Tools for Developers and the AI Ecosystem:

- Jules, an asynchronous AI coding agent: This AI assistant is designed to help developers write, debug, and manage code more efficiently, working alongside them.

- Support for Anthropic’s Model Context Protocol (MCP): Signifying a move towards greater interoperability, Google will support this protocol, potentially allowing for easier integration and use of different large language models.

- New updates to Deep Research and Canvas: These tools, likely aimed at researchers and creatives, will receive further AI enhancements to boost their capabilities.

New Platforms and Subscription Tiers:

- Beam, a 3D AI-first video communication platform: Promising a more immersive and interactive way to communicate, Beam leverages AI to create novel 3D video experiences.

- Google AI Pro and AI Ultra subscription plans: To provide access to its most powerful models and features, Google is introducing new premium subscription tiers for its AI services.

Glimpse into the Future: Hardware and OS

While the keynote was heavily software and AI-focused, there were nods to upcoming hardware and platform developments:

- A look at Android XR-powered smart glasses: Google provided a sneak peek at its vision for augmented reality, showcasing potential smart glasses powered by an XR-focused version of Android. This signals continued investment in immersive computing.

- Pixel 10 Series and Tensor G5 Chip (Expected): While not detailed in the keynote, flagship hardware like the Pixel 10 series, powered by a new Tensor G5 chip optimized for these advanced AI models, is widely expected to be formally detailed later in I/O or in the coming months. These on-device capabilities are crucial for realizing the full potential of the announced AI features.

- Pixel Fold 2, Wear OS 6.0, Pixel Watch 3, and Android 16 (Expected): Similarly, updates to the foldable line, wearables, and the next version of Android (Android 16) are expected to be deeply intertwined with the Gemini advancements and the Chimera-like ecosystem, focusing on privacy, AI integration, and enhanced user experiences.

Looking Ahead:

Google I/O 2025 painted a compelling picture of a future where AI is deeply embedded in nearly every digital interaction. The sheer volume of announcements centered around Gemini and its applications underscores Google’s strategy to lead in the AI era. From making search more intuitive and communication more seamless to providing powerful new creative and developer tools, Google is pushing the boundaries of what AI can achieve.

The introduction of subscription plans like AI Pro and AI Ultra also signals a new phase in how Google plans to monetize its cutting-edge AI capabilities. As these tools and features roll out, the focus will be on user adoption, ethical considerations, and the real-world impact of this AI-driven transformation.

Stay tuned to our website for more in-depth coverage and analysis of all the Google I/O 2025 announcements and hardware deep dives as more information becomes available.

AI/ML

xAI Restructuring Leads to Major Co-Founder Departures

Major Restructuring at xAI Sparks Co-Founder Exodus

Key Takeaways

- Elon Musk’s xAI restructured, leading to the exit of six co-founders and over ten engineers.

- Notable departures include co-founders Tony Wu and Jimmy Ba.

- Musk reorganized xAI into four main product teams focused on AI efficiency.

- The restructuring raises questions about the company’s organizational stability and innovation potential.

- Upcoming products such as the standalone XChat app and X Money are anticipated.

Context / Background

xAI was founded by Musk to focus on advanced AI technologies. Following its recent merger with SpaceX, the company took steps aimed at enhancing productivity and ensuring that it could keep pace with the rapidly evolving AI landscape. The restructuring was officially announced just days before an all-hands meeting held on February 10, 2026, which marked the first such meeting since the merger.

Key Details

The wave of departures included prominent figures such as Tony Wu, who announced his resignation via X on February 9, stating it was “time for my next chapter.” Co-founder Jimmy Ba followed suit during the all-hands meeting, where he thanked Musk and made a bold prediction of achieving “100x productivity” in AI within a year. Other co-founders who exited included Hang Gao, Roland Gavrilescu, and Chace Lee, with plans to start new AI ventures comprising smaller teams.

This restructuring resulted in a dramatic reduction of xAI’s founding team, with only six of the original twelve members remaining. Additionally, more than ten engineers publicly departed in the same week, further indicating a shift within the company. Despite these exits, xAI retains more than 1,000 employees and continues to hire aggressively, signaling an important push for growth.

In terms of organizational changes, Musk reorganized xAI into four primary product teams: Grok, Grok Voice, Grok Code, and Grok Imagine, along with a team focused on Macrohard, which aims to automate white-collar work utilizing Grok-powered multi-agent systems. Musk emphasized that these changes were necessary to improve the speed of execution as the company evolves. He stated that some individuals were “better suited for early stages” of development and less so for later stages, which justified the need to “part ways” with specific team members.

Impact

The departures could have ramifications for xAI’s capabilities and innovation, especially given the ongoing competition with AI leaders such as OpenAI, Anthropic, and Google. The restructuring has triggered discussions about employee retention in an industry rife with rapid advances and significant talent poaching.

Furthermore, the controversy surrounding xAI is compounded by ongoing regulatory scrutiny. Notably, French authorities raided X offices in relation to concerns over the potential misuse of Grok technologies, particularly in generating non-consensual deepfakes, which could reflect deeper issues regarding ethical AI deployment and corporate governance.

For users and stakeholders, the rapid changes signal an early push towards a more structured product development path at xAI. However, they also raise questions about organizational stability and the firm’s ability to innovate amid the exits of experienced personnel.

What’s Next

As xAI forges ahead, the company is poised for significant developments, especially with Musk’s ambitious visions laid out during the all-hands meeting. These include the forthcoming standalone XChat app for messaging and video communication, along with X Money, an application designed for global financial transactions that is currently in a closed beta phase. With the anticipated IPO in 2026, the structural changes could ultimately play a crucial role in how well xAI responds to market demands and regulatory challenges in the coming years.

FAQ Section

What happened to the xAI co-founders?

Six out of the twelve original co-founders left xAI due to a significant restructuring aimed at improving efficiency after the company’s merger with SpaceX.

Who are the departed co-founders?

The departed co-founders include Tony Wu, Jimmy Ba, Hang Gao, Roland Gavrilescu, and Chace Lee.

Why did they leave?

They expressed the need for new ventures and aspirations, and Musk indicated that some were better suited for earlier stages of development.

What are the organizational changes at xAI?

xAI has been reorganized into four primary product teams: Grok, Grok Voice, Grok Code, and Grok Imagine, along with a focus on Macrohard for automating white-collar work.

How will this affect xAI?

The restructuring could impact xAI’s innovation capabilities and its ability to retain talent amidst fierce competition in the AI industry.

AI/ML

India Pursues Global AI Commons at Summit in New Delhi

India to Push for Global AI Commons at AI Impact Summit in New Delhi

- India aims to establish a “global AI commons” at the AI Impact Summit on February 19-20, 2026.

- Focus on AI’s potential to drive social impact in health, education, and agriculture.

- Collaboration among nations to share resources and technologies rather than just purchasing them is emphasized.

- 12 projects are currently funded to enhance India’s AI capabilities.

- The summit will guide discussions around global collaboration in AI technologies.

Main Content

Context / Background

Key Details

Impact

What’s Next

FAQ Section

What is the AI Impact Summit?

When will the AI Impact Summit take place?

What is the goal of establishing a “global AI commons”?

What role does India aim to play in AI advancements?

AI/ML

Adobe unveils Firefly Foundry to build IP-safe generative AI models for studios

Adobe is expanding its Firefly AI ecosystem with a new offering called Firefly Foundry, pitched as a way for entertainment and media companies to use generative AI without risking third-party intellectual property violations. Timed with this year’s Sundance Film Festival, the initiative focuses on “private, IP-safe” omni-models built and trained specifically for individual clients such as studios, streamers, and talent agencies. (The Verge, Jan 22, 2026)

Firefly Foundry differs from many mainstream generative AI models by restricting its training data to content that the client already owns or has rights to use. Instead of drawing on massive internet-scale datasets, Adobe’s engineers work with partners to build bespoke models that learn from studio libraries, brand assets, and franchise materials under clear licensing controls. The company says this approach is meant to enhance creative workflows while protecting ownership and artistic intent across the production pipeline. (The Verge, Jan 22, 2026)

The new models are designed to support a range of production tasks, from early concepting to final post-production. Adobe highlights use cases such as generating audio-aware video clips, 3D elements, and vector graphics that can drop into existing timelines and project files in applications like Premiere Pro and other Creative Cloud tools. By keeping everything inside a controlled, rights-cleared environment, studios gain the speed and flexibility of generative AI while maintaining stricter guardrails on how their IP is used and extended. (The Verge, Jan 22, 2026)

Firefly Foundry grew out of previous enterprise engagements where Adobe offered less customizable Firefly models trained on licensed stock and public domain material. Those earlier systems could reliably produce static images but struggled to reflect the visual language and narrative worlds of specific franchises. Executives say clients increasingly asked for models that truly understood their universes and characters, leading Adobe to develop a service that can be tuned deeply on proprietary catalogs while still following its established principles around responsible AI. (The Verge, Jan 22, 2026)

For Hollywood, where legal exposure and brand control are constant concerns, the promise of IP-safe AI arrives at a sensitive moment. Recent industry labor disputes and ongoing debates over synthetic performers, AI-written scripts, and digital doubles have sharpened scrutiny of how training data is sourced and how credits and compensation are handled. By framing Firefly Foundry as a tool that stays within the boundaries of owned IP, Adobe is signaling that studios can modernize their pipelines without crossing current legal and ethical red lines. (The Verge, Jan 22, 2026)

Hannah Elsakr, Adobe’s vice president of generative AI new business ventures, has positioned the service as a natural step for large media companies already reliant on Adobe tools. She notes that enterprises have been asking Adobe not just for AI features, but for partnership on governance, safety, and long-term integration of generative systems into creative work. With Firefly Foundry, Adobe is betting that its track record with Photoshop, Premiere Pro, and other staples will help it become a default AI partner for the entertainment industry’s next phase of digital production. (The Verge, Jan 22, 2026)

The move also reinforces Adobe’s broader strategy around content provenance and accountability. Previous Firefly products incorporated content credentials to document how AI-generated media was created, a feature that can support both transparency for audiences and auditability for rights holders. Extending that philosophy into customized, IP-bound models may give studios a clearer chain of custody for AI-assisted assets, an attractive prospect as regulators and industry bodies continue to refine standards around synthetic content. (The Verge, Sept 13, 2023; Jan 22, 2026)

Looking ahead, Firefly Foundry positions Adobe in direct competition with newer AI startups offering tailored models for brands and media clients. However, Adobe’s deep integration with existing post-production and design workflows could prove a significant advantage, allowing editors, VFX teams, and marketers to experiment with generative tools inside familiar environments. If the service delivers on its IP-safe promise, it may help reshape how films, series, and campaigns are developed, with generative AI embedded across every stage but still operating within carefully negotiated rights frameworks. (The Verge, Jan 22, 2026)

- Why it Matters:

- Offers studios a way to deploy generative AI trained only on rights-owned assets, potentially lowering legal risk around IP use.

- Integrates with Adobe’s existing creative suite, making AI-assisted production easier to adopt for established teams and workflows.

- Aligns with growing demands for provenance, transparency, and responsible AI in synthetic media and entertainment content.

-

Entertainment10 months ago

Entertainment10 months agoSquid Game Season 3 Trailer Teases a Brutal Finale: Gi-hun Returns for One Last Game

-

Fashion9 years ago

These ’90s fashion trends are making a comeback in 2017

-

Business9 years ago

The 9 worst mistakes you can ever make at work

-

Science6 months ago

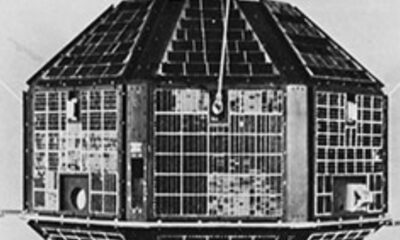

Science6 months agoAryabhata: India’s First Satellite That Sparked a Space Revolution

-

AI/ML2 months ago

AI/ML2 months agoAdobe unveils Firefly Foundry to build IP-safe generative AI models for studios

-

Fashion9 years ago

According to Dior Couture, this taboo fashion accessory is back

-

Science10 months ago

Science10 months agoVera C. Rubin Observatory Unveils First-Ever 3,200-Megapixel Images

-

Sports9 years ago

Phillies’ Aaron Altherr makes mind-boggling barehanded play